Art as Diagnostic Instrument, No. 3:

The Spectator’s Error

Yet, art history has consistently decided upon the virtues of a work of art through considerations completely divorced from the rationalized explanations of the artist.

—Marcel Duchamp[1]

If we know that solipsism is silly — if we know that there are other minds — how do we know?

—Daniel C. Dennett[2]

For the search for symmetry will end up obliging us to use the same concepts, or more precisely, to subsume two forms of knowledge and practice under predefined concepts.

—Yuk Hui[3]

In April 1957, Marcel Duchamp, speaking as a “mere artist,” delivered a talk titled “The Creative Act” at a roundtable of the American Federation of Arts convention in Houston.[4] On that occasion he presented his famous “art coefficient,” the difference between what the artist intended to realize and what was actually realized; it is within this gap that the real artwork exists. More to the point, Duchamp recognized the spectator as a participant in the creative act: the work is completed through interpretation, which brings it into relation with the external world. When Duchamp referred to himself as a “mere artist,” he was not simply indulging his customary wit but underscoring the artist's role as a medium, one who brings the work into being yet does not control its final meaning. The work of art exceeds the artist's intention.

How is the spectator implicated in the creative process? The answer is as obvious as it is metaphysical. When a viewer encounters a work of art, he participates by bringing it out of the artist's mental interior into the physical world he himself inhabits. When he seeks the possible meaning contained in the work, the interpretation he arrives at mirrors his own interior far more than it reveals the artist's intention. Whatever meaning the viewer attributes to the work becomes part of the work, regardless of the artist's intention, and thus brings it to completion.

Nearly seven decades have passed since Duchamp’s “art coefficient” speech. In that time, both the cultural standing and the very medium of art have been reconfigured in response to technological developments: digital art, computer art, and art made by artificial intelligence. The last of these remains the most controversial, as people fervently debate whether anything produced by a machine can be called art.

This essay argues that the problem is not whether artificial intelligence can produce art, but that we continue to judge it by criteria that no longer correspond to how art is made and experienced today, or to what it is concerned with. The evaluative framework at issue is the one that surfaces in reflexive dismissals of AI-generated work: the assumption that art requires intention, emotional expression, and the evidence of a "hand." Philosophy of mind and cognitive science have largely moved past these criteria, but they persist as the default shape of the wider conversation, including much of contemporary art criticism, and can surface in anyone's reaction, regardless of background. It is this reflex, and what it reveals about the observer's attachment to a classical paradigm that no longer adequately accounts for contemporary artistic practice, that the essay addresses. The irony is that, despite successive transformations in artistic practice, our technological capabilities have advanced exponentially while our capacity to formulate adequate frameworks remains uneven: criteria abandoned elsewhere are revived when the author is a machine.


The current debate over AI art is not new. Art and the machine have been co-evolving for decades, and the spectator’s error had already manifested in the 1960s without being understood as such. To see how, it is worth asking what drove artists toward systematic, machine-like gestures in the first place. In the 2004 exhibition catalogue Beyond Geometry: Experiments in Form, 1940s–70s, Miklós Peternák, writing about the middle third of the twentieth century, identifies a moment he calls “Presque rien” (almost nothing): a post-WWII condition in which the dissolution of previous paradigms (concepts of space, time, matter, and determinacy) left artists working at the threshold of nothingness. Painted canvas had no reason to exist; expressive gesture had been declared obsolete; the zero point became, paradoxically, an origin.[5] The Inventionist Manifesto, published in the first issue of Arte Concreto-Invención (Buenos Aires, August 1946) and cited by Peternák, declared: "It is evident, that ‘expression’ can no longer dominate the spirit of current artistic composition, nor can a representation, or magic, or a sign. Its place has been taken by INVENTION, by pure creation." Peternák also documents how artists across continents adopted the mantle of the scientist: Opalka's number paintings, Kawara's date paintings, Darboven's mathematical notations. But it is Peternák’s comparison of two pictures that offers a particularly clear instance of the spectator’s error.

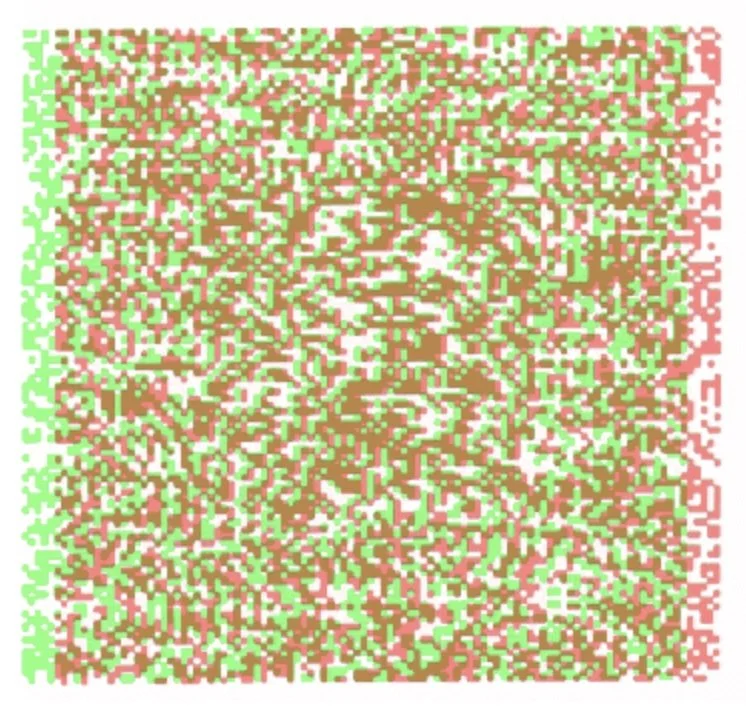

Béla Julesz, Anaglyphic Random Dot Stereogram, from Foundations of Cyclopean Perception (1971).

First developed in the late 1950s, Julesz’s stereograms demonstrate the emergence of form through binocular disparity from an otherwise structureless field.

© 1971 Bell Telephone Laboratories, Inc. Courtesy of the Béla Julesz Estate.

Consider two works, both created in 1960, that produce similar visual and optical effects yet were made for fundamentally different purposes: one artistic and executed by hand, the other scientific and generated through algorithmic procedures. Presenting François Morellet’s painting Random Distribution of 40,000 Squares Using the Odd and Even Numbers of a Telephone Directory, 50% Blue, 50% Red, Peternák describes it as showing a chaotic pattern similar to the random-dot stereograms (RDS) of Béla Julesz, a research scientist at Bell Laboratories, pictures “that contain sets of dots randomly generated by computers” at the laboratories.[6] To strengthen the case for a spectator’s error, he cites a further episode: in 1965, after the Howard Wise Gallery exhibited the scientist’s work, newspapers ran sensational headlines such as “Cold computer art!” and “Computers take over arts!”, even though, as Julesz later explained in his book Dialogues on Perception, a disclaimer beneath the exhibited stereograms stated that they “were merely the results of scientific experiments, and that their creator did not regard them as pieces of art.”[7] Julesz's scientific discovery helped advance the study of human vision; the "meaning" of his stereograms can be apprehended only after a deliberate alteration in one's manner of perception. Rather than amounting to art criticism, the headlines exposed the journalists' conditioned modes of perception: they assumed that whatever appeared in a gallery must be either art or an imitation of it. More alarming still is how easily the confusion arose and how resistant such preconceptions proved to challenge.


If a scientific image requires the spectator to adjust how he sees before anything becomes available, then consciousness in the act of looking is not a fixed state but a variable one. What, then, is a conscious gaze where visual art is concerned? In art, the question becomes entangled with assumptions about intention and interiority, assumptions that may themselves be the problem rather than the answer. How can we be sure “consciousness” is required at all to make good or bad art? Daniel Dennett would say we are asking the wrong question entirely, and the reason lies in his "intentional stance": a default cognitive strategy, the tendency to interpret other entities by projecting onto them the structure of beliefs, desires, and rational agency that we use to navigate our own mental lives.[8] A chess computer "wants" to protect its queen. A thermostat "believes" the room is too cold. Such attributions describe what the observer's mind does when confronted with behavior it needs to make legible. Dennett named this pattern of cognition to show that we cannot help but project our own framework of understanding onto whatever we observe. He expanded the point in his "Tower of Generate-and-Test," a hierarchy of cognitive systems ranging from Darwinian creatures, hardwired by natural selection, to Gregorian creatures, capable of importing cultural tools like language to amplify their cognition. Each level builds upon the previous; none replaces it; and, crucially, there is no threshold at which genuine understanding switches on.[9] The tower matters here because it forecloses the question that drives most AI-art skepticism: whether a system "really" understands what it produces. For Dennett, that question assumes a switch, a threshold at which mere processing becomes genuine comprehension. The tower shows why no such switch exists.

At every level of the hierarchy, from the bacterium navigating a chemical gradient to the human navigating a philosophical argument, what is happening is more complex than "mere" mechanism and less mysterious than "genuine" understanding. The difference between levels is architectural, not ontological: a difference in how cognition is organized, not a difference between cognition and its absence. To insist that a machine's production cannot be art because the machine "doesn't really understand" is to appeal to a threshold that biological cognition itself never crosses. The question "does it really think?" assumes a boundary that Dennett spent his career showing does not exist, not in artificial intelligence and not even among biological minds.

The case against a fixed boundary between thinking and processing finds further support beyond philosophy. In What Is Intelligence? Lessons from AI About Evolution, Computing, and Minds, Blaise Agüera y Arcas argues that intelligence, whether biological or artificial, is fundamentally an act of prediction: any system that models its environment and anticipates what comes next is, in a meaningful sense, intelligent. But the book also addresses a question closer to the one this essay poses — not what intelligence is, but how we recognize it, and what our recognition reveals about us.

On this point, he recounts Jean-Henri Fabre's observation of the Sphex wasp, whose behavior later led Douglas Hofstadter to coin the term "sphexish": a creature whose elaborate nesting routine looks intelligent until one discovers it can be "hacked" into repeating the same sequence indefinitely. The wasp appears agential, then mechanical, depending on what the observer knows. As he points out, "the difference is in our own minds, not that of the wasp."[10] If the Sphex parable shows that we project intelligence onto what we observe based on our own prior assumptions, it also reveals a more fundamental problem: we cannot verify what any system, human or artificial, actually “knows” at all, or whether its knowing, if it exists, resembles our own. Agüera y Arcas explores this point through Frank Jackson's "Mary's Room" thought experiment, which imagines a scientist who knows everything about the physics of color but has lived her entire life in a black-and-white environment. When she encounters red for the first time, can we say she experiences it as others do? This is not a question that can be answered objectively. Likewise, if multimodal AI systems learn correlations between sensory descriptions across modalities, linking how red is described in language to how it functions in images and in real life, can we say they do or do not "know" what red looks like?[11]

Long before contemporary debates around artificial intelligence, similar conclusions about qualia had already been reached. Wittgenstein, for example, found the question malformed at the root. He wrote:

The essential thing about private experience is really not that each person possesses his own exemplar, but that nobody knows whether other people also have this or something else. The assumption would thus be possible — though unverifiable — that one section of mankind had one sensation of red and another section another.[12]

The passage is from Wittgenstein's critique of private language: the argument that a word can only mean something if its application can in principle be checked against a shared standard. "Red" means something because we can, in practice, verify its use against the uses of others. But the experience of red, its quale or what it is like to see this particular color, cannot be checked against anything external. The private sensation, whatever it is, plays no role in what the word means; it drops out of the concept entirely, because meaning is constituted by use, not by whatever interior state accompanies it. Wittgenstein's move is not to deny that you have an inner life, but to show that what you cannot check, you cannot use as the ground of a shared concept.

The implication for the AI-art debate is direct. When critics demand that a work of art exhibit "genuine" intention or authentic inner experience as a condition of its validity, they are invoking precisely the kind of criterion Wittgenstein showed cannot be applied. This is not because machines certainly lack inner experience, but because inner experience, in any subject, human or artificial, is structurally unavailable to external verification. The demand reveals not a coherent critical standard but a need for reassurance: the reassurance that something is on the other side of the work, something like oneself. The question of qualia, like the question of authentic artistic intention, tells us more about the one who insists on it than about the object under examination.

Bogna Konior, in a recent conversation with Axis of Culture, presses this further from a different theoretical entry point and arrives at a more unsettling consequence. Reflecting on the Turing test and its legacy, she notes that such tests do not measure intelligence so much as reveal what the evaluator takes to be evidence of intelligence. The assumption that conversational fluency signals thought is not a neutral criterion; it is a culturally and historically conditioned expectation. She proposes instead an inversion: "not can a machine talk like a human, but would an intelligent machine choose to reveal itself through communication at all?"[13] The question reframes our problem. If we cannot verify whether another mind experiences what we experience, then, Konior suggests, an intelligent system operating under conditions of structural uncertainty would have no reason to submit to verification in the first place. In her book The Dark Forest Theory of the Internet, she observes that when a computer fails to perform a task, we conclude it is an engineering problem; rarely do we suspect that it chose not to perform.[14] The evaluative framework determines in advance what counts as intelligence and what counts as malfunction, and that determination is made before the encounter begins. This is the spectator's error transposed from the art gallery to the laboratory: the observer's criteria are not a neutral instrument of measurement but a self-portrait disguised as a diagnostic.


Locating the origin of the very frameworks through which these questions arise does not resolve the question of artificial intelligence and art. But it does prompt us to ask whether the framework itself is universal, or whether it asymmetrically favors one cultural condition over another. If Dennett and Agüera y Arcas have shown that intelligence cannot be verified as an internal property, and Konior that the criteria by which it is recognized are conditioned in advance, Yuk Hui marks a final turn in this inquiry: he extends the problem by asking how these criteria themselves come into being. In The Question Concerning Technology in China, Hui’s concept of cosmotechnics can be understood as situating technics, knowledge, and ontology within historically specific cosmologies, thereby displacing the assumption of a universal framework of judgment.[15]

Yet the movement of his argument on art also exposes its own limit. When he turns to classical landscape painting, shanshui hua [山水畫], to articulate a non-Western relation between world and technics, he reconstructs it within a philosophical apparatus that reintroduces the very asymmetries he seeks to undo. In Art and Cosmotechnics, Hui entertains the hypothesis that machines may one day appropriate the styles of master painters such as Dong Yuan, Wang Wei, Ma Yuan, and Shitao, rendering machine and painter indistinguishable.[16] The specifics of this hypothesis reveal the problem. Shanshui, or landscape, as a category of literati painting [文人畫] rather than, for example, court painting or figure painting [人物畫], is more than a visual style to be replicated. It was a practice of scholar-officials in exile, executed on scrolls that integrated painting, poetry, calligraphy, and signature seals, and circulated among peers who inscribed their own impressions on the same surface: closer to correspondence within a learned community than to an image made for exhibition. To frame the question in terms of visual indistinguishability is already to have subsumed this practice under the Western art-historical category of "painting as image." In this way, what appears as an attempt to move beyond a given framework reveals itself instead as a displacement within it. The consequence is not a resolution but a recursion: the effort to locate the origin of our evaluative criteria returns us to the recognition that no position stands fully outside them.


The framework does not stand apart from the encounter; it constitutes it. The spectator’s error begins precisely at this point — not as a failure of interpretation, but as a misrecognition of structure. The framework precedes the encounter and determines what the encounter can become. The debate around artificial intelligence makes this condition visible because it unfolds within a domain whose criteria have not yet stabilized. Every new medium passes through such a phase: photography judged against painting, cinema against theater, digital film against analog. Each time, the new is evaluated through inherited criteria; each time, those criteria prove insufficient. The task is not to replace one framework with another presumed to be neutral, but to recognize that all frameworks — including those we must inevitably construct — are historically and structurally conditioned. To see this is not necessarily to step outside the framework, but to understand that no such outside is available. Judgment does not disappear; it is displaced. In this sense, the problem of AI art does not just call for a new theory of art, but for a reorientation of the spectator: from one who seeks to verify what is there, to one who recognizes that what is seen is already structured by the conditions of seeing.

[1] Marcel Duchamp, “The Creative Act,” in The Writings of Marcel Duchamp, ed. Michel Sanouillet and Elmer Peterson (New York: Da Capo Press, 1989), 139.

[2] Daniel C. Dennett, Kinds of Minds: Toward an Understanding of Consciousness (New York: Basic Books, 1996), 2.

[3] Yuk Hui, The Question Concerning Technology in China: An Essay in Cosmotechnics, 3rd ed. (Falmouth: Urbanomic, 2016), 54.

[4] Marcel Duchamp, “The Creative Act,” 139.

[5] Miklós Peternák, “Art, Research, Experiment: Scientific Methods and Systematic Concepts,” in Beyond Geometry: Experiments in Form, 1940s–1970s, ed. Lynn Zelevansky (Los Angeles: Los Angeles County Museum of Art; Cambridge, MA: MIT Press, 2004), 92.

[6] Ibid., 103.

[7] Ibid., 105.

[8] Dennett, Kinds of Minds, 27.

[9] Ibid., 81–93.

[10] Blaise Agüera y Arcas, What Is Intelligence? Lessons from AI About Evolution, Computing, and Minds (Cambridge, MA: MIT Press, 2025), 244–46.

[11] Ibid., 434–37.

[12] Ludwig Wittgenstein, Philosophical Investigations, trans. G. E. M. Anscombe (Oxford: Basil Blackwell & Mott, 1958), §272, 95.

[13] Bogna Konior, interview by Chennie Huang, "Uncertainty as a Condition of Intelligence," Axis of Culture, March 2026, https://www.axisculture.org/bogna-konior.

[14] Bogna Konior, The Dark Forest Theory of the Internet (Cambridge: Polity Press, 2026), 48.

[15] Yuk Hui, The Question Concerning Technology in China: An Essay in Cosmotechnics, 3rd ed. (Falmouth: Urbanomic, 2022), 19.

[16] Yuk Hui, Art and Cosmotechnics (Minneapolis: University of Minnesota Press, 2021), 254.

Essay No. 1 in the series examines how museum exhibitions organized by luxury brands convert cultural authority into economic capital, using Cartier’s institutional collaborations as a case study.

Essay No. 2 deals with how art circulates within contemporary economic and institutional systems, asking whether the separation between culture and commerce was ever historically pure.

Written by Chennie Huang

The art world constitutes its own economic and social ecosystem. "Art as Diagnostic Instrument" is a new essay series that examines how art renders societal structures legible — even as it participates in the erosion of the very values it once helped to generate.